AI Video Model News: Latest Updates & Breakthroughs in 2026

Stay ahead with AI video model news covering the latest updates, breakthroughs, tools, and trends shaping video creation and content workflows in 2026.

AI video model news in 2026 is getting harder to ignore, especially if you’ve been trying to keep up with how fast things are changing. Just a few months ago, Sora from OpenAI was leading most conversations; now Kling from Kuaishou, Veo from Google DeepMind, and updates from Runway ML are pulling the space in different directions. At the same time, platforms like Frameo are making these models easier to use for real content, not just experiments. The question is starting to feel less like “which tool is best” and more like “which model is changing the game right now.”

This article breaks down the latest AI video model updates, key breakthroughs, major players, and what these changes actually mean for creators and content workflows in 2026.

Key Takeaways

- The AI video model landscape in 2026 is evolving rapidly, with frequent updates, new capabilities, and shifting priorities around quality, cost, and usability.

- A major shift is visible from standalone video generation to full production workflows, where platforms like Frameo focus on consistency, structured storytelling, and end-to-end content creation.

- Core capabilities defining top models now include native audio-video generation, character consistency, motion control, and API-based scalability, making AI video more practical for real-world use cases.

- The market has clearly segmented into quality-first models (Veo), cost-efficient models (Kling), and ecosystem/workflow-driven platforms (Frameo), making selection dependent on use case rather than a single “best” model.

- Vertical video and short-form content are becoming dominant, with nearly half of AI-generated videos optimized for 9:16 formats, reflecting mobile-first consumption and social media-driven demand.

What’s Happening Right Now: AI Video Model News

The last few weeks have brought some of the biggest shifts in AI video so far, from new models climbing global rankings to major platforms shutting down and others introducing real production-level control. These developments are shaping which models creators choose and how video content is being produced right now.

Alibaba's HappyHorse-1.0 Tops Global Video Generation Leaderboards

As of April 10, 2026, HappyHorse-1.0 by Alibaba ranks among the top AI video models globally on Artificial Analysis benchmarks.

- April 10, 2026 — Alibaba officially enters the top tier of AI video generation with HappyHorse-1.0, outperforming several existing models in realism and motion consistency

- Benchmarked on platforms like Artificial Analysis Arena, the model shows strong performance in temporal coherence and scene stability

- Signals a major shift as Chinese AI companies continue to compete aggressively with Western models

- Adds pressure on models like Veo, Kling, and Runway to improve output quality and cost efficiency

Sora Is Shutting Down: What It Means for Creators & What to Use Instead

OpenAI will shut down Sora access starting April 26, 2026 (app) and September 24, 2026 (API), marking a major shift in the AI video landscape.

- April 2026 — Sora, once one of the most anticipated AI video models, begins phased shutdown

- App access ends on April 26, while API access continues until September 24

- Creates immediate demand for Sora alternatives in 2026, including Kling, Veo, and Runway models

- Pushes creators toward platforms that offer complete workflows instead of standalone models

- Platforms like Frameo are being explored as part of this shift, especially for turning scripts into consistent narrative videos

Kling 3.0's Motion Control Update Changes How AI Video Works

Released on March 8, 2026, Kling 3.0 by Kuaishou introduces advanced motion control features, including 6-axis camera movement and object path drawing.

- March 8, 2026 — Kling 3.0 update introduces precise motion control, a major limitation in earlier AI video models

- Features include:

  - 6-axis camera control for cinematic movements

  - Object path drawing to guide motion within scenes

- Enables creators to move beyond static or unpredictable outputs toward directed storytelling

- Positions Kling as one of the most creator-focused AI video models in 2026

Also read: How to Create Vertical Videos for Social Media

The Complete AI Video Model Comparison: 2026

Choosing the right AI video model in 2026 depends less on hype and more on what actually fits your workflow: quality, control, cost, or storytelling. With multiple strong contenders emerging, a clear comparison helps cut through the noise and shows where each model stands today.

Here’s a side-by-side breakdown of the most important AI video models right now.

AI Video Model Comparison Table (2026)

Model | Resolution | Audio | Price / Access | Best For |

|---|---|---|---|---|

Kling 3.0 | Native 4K | No native audio | From $79/month | Best overall value, motion control |

Veo 3.1 | 4K @ 60fps | Native synchronized audio | From $2.49 /Monthly | Cinematic quality, realism |

Runway Gen-4.5 | High fidelity (4K capable) | No native audio | From $12/ Month | Professional filmmaking pipelines |

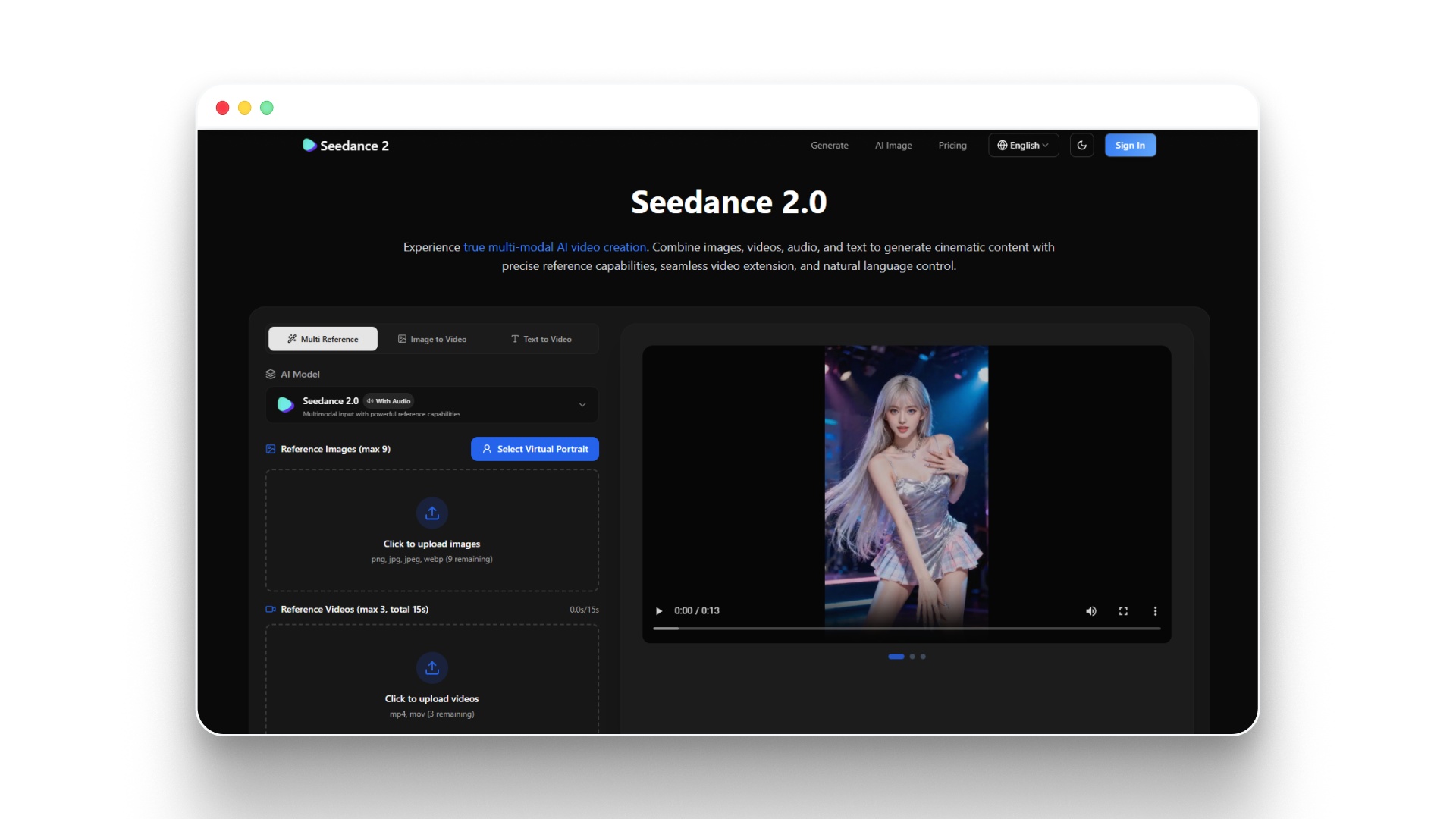

Seedance 2.0 | High-quality HD/4K | Native audio-video generation | From $14.90/month | Unified audio-visual generation |

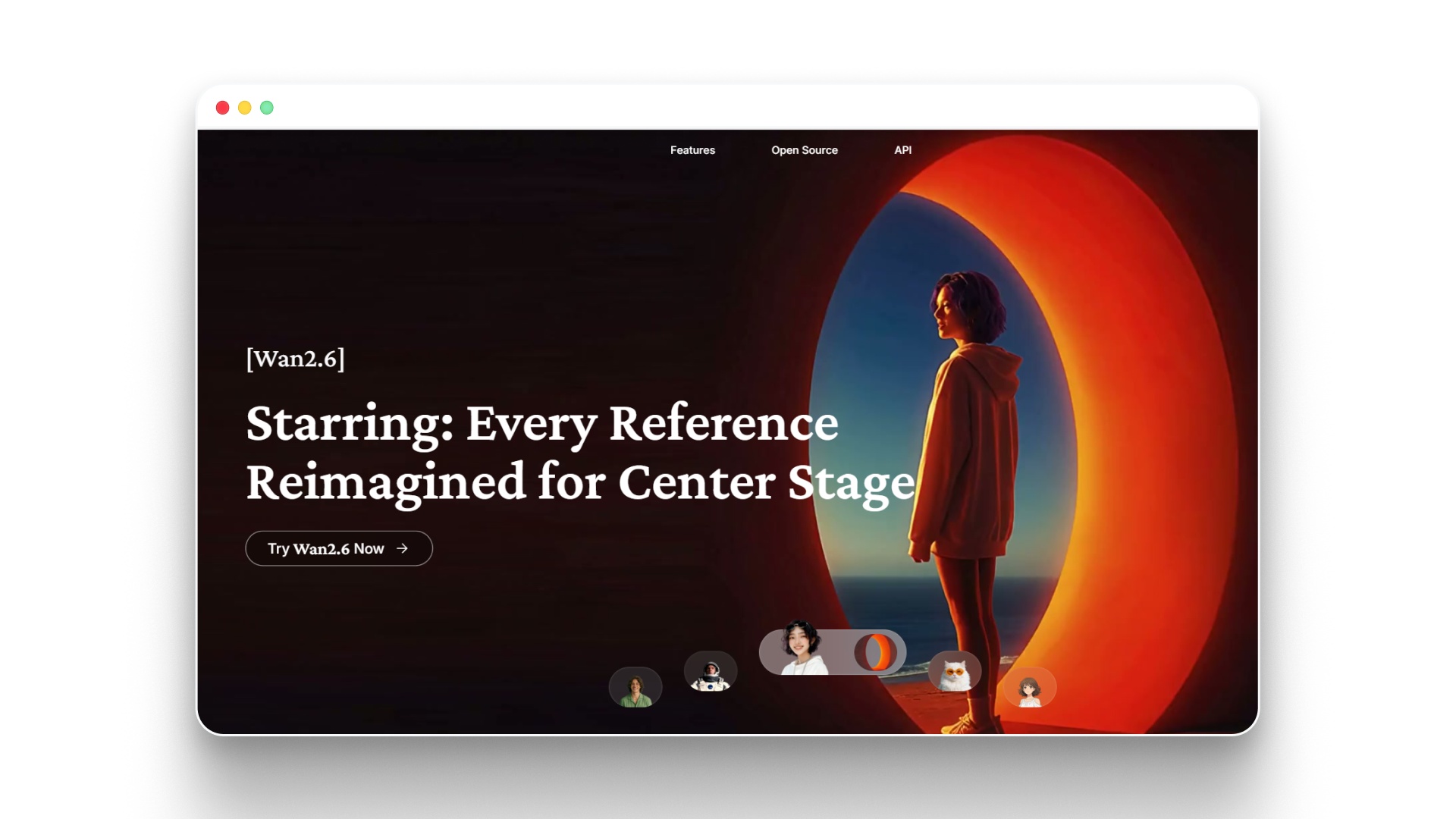

Wan 2.6 | Varies (open-source) | Depends on setup | Free and from $5/month | Developers, scalable pipelines |

Frameo (Workflow Layer) | Depends on underlying models | Full audio + voice support | Free version available | Story-driven video creation |

Each of these models solves a different part of the video creation process, which is why choosing the “best” one depends on what you’re actually trying to create.

2026 AI Video Model Landscape: Who’s Leading & Why It Matters

The 2026 AI video model space is about who is leading in quality, control, cost, and real-world usability. Each major model is now competing on a specific strength, which makes understanding their positioning far more useful than tracking release dates.

Here’s how the current leaders actually stack up and where each one is winning.

1. Frameo

In 2026, platforms like Frameo represent a shift from standalone AI models to structured, story-driven video generation workflows.

- Focuses on a script → storyboard → video pipeline, rather than isolated clip generation

- Maintains character consistency and narrative continuity across scenes, a known limitation in many models

- Combines multiple capabilities such as script writing, visual generation, and editing within a single system

- Reflects a broader trend where creators prioritize usable storytelling outputs over raw generation capability

2. Kling 3.0


As of 2026, Kling 3.0 by Kuaishou provides native 4K output along with motion control features at a relatively low subscription cost.

- Supports native 4K video generation with extended clip durations

- Includes 6-axis camera control and object path guidance

- Offers multi-shot storyboard capabilities

- Positioned as a cost-efficient option with creator-oriented controls

3. Google Veo 3.1

Google DeepMind Veo 3.1 delivers 4K video at 60fps with synchronized audio and strong performance in benchmark evaluations.

- Generates video with integrated audio (dialogue and ambient sound)

- Supports high frame rate output with realistic motion simulation

- API pricing adjustments in April 2026 improved accessibility

- Typically used for high-quality cinematic outputs

4. Runway Gen-4.5


Runway ML Gen-4.5 ranks highly on Artificial Analysis benchmarks and is commonly used in professional workflows.

- Known for strong visual fidelity in generated outputs

- Integrates well into existing creative and editing pipelines

- Does not include native audio generation

- Available through a subscription-based pricing model

5. Seedance 2.0

Seedance 2.0, released in January 2026, uses a unified architecture to generate audio and video simultaneously.

- Produces synchronized audio and visuals in a single generation step

- Maintains motion consistency across scenes

- Reduces reliance on external audio processing

- Suitable for rapid content generation workflows

6. Alibaba Wan 2.6


Alibaba Wan 2.6 provides an open-source framework for teams building custom AI video pipelines.

- Free to use with customizable deployment options

- Supports large-scale generation through API integration

- Requires technical setup and infrastructure management

- Often used in development-heavy environments

Also read: 12 AI Tools for Music Production in 2026: Features, Pricing, and Use Cases

The 5 Biggest Trends Reshaping AI Video in 2026

If you’ve been following AI video even casually, 2026 probably feels very different from last year. It’s no longer about “what can AI generate,” but more about how usable, consistent, and scalable that output actually is.

Here are the shifts that are quietly changing how creators and teams work with AI video right now.

Trend 1: Native Audio-Video Generation Is Now the Baseline

As of 2026, 4 out of 6 major AI video models generate synchronized audio, compared to none in early 2025.

- Not long ago, most AI videos were silent by default:

  - Audio had to be added separately

  - Workflows felt fragmented

- Now, models are starting to generate:

  - Dialogue

  - Ambient sound

  - Scene-aware audio

- This changes how videos are created:

  - Fewer tools involved

  - Faster turnaround

  - More complete outputs from a single step

It’s becoming normal to expect a video to “come with sound,” not just visuals.

Trend 2: Character & Scene Consistency Is No Longer Optional

Maintaining the same character, look, and environment across scenes is now expected for most professional use cases.

- Earlier outputs often had issues like:

  - Characters changing appearance

  - Scenes losing visual continuity

- That’s no longer acceptable for:

  - Storytelling

  - Branded content

  - Episodic videos

- Newer systems are focusing more on:

  - Consistent characters

  - Stable environments

  - Predictable scene flow

- Platforms like Frameo are structured around this idea from the start

The shift is simple: clips are not enough, stories need consistency.

Trend 3: AI Video Is Becoming Part of Software

AI video generation is increasingly being used as an API layer inside products, not just standalone tools.

- Instead of someone opening a tool manually, video is now generated inside:

  - marketing platforms

  - ecommerce systems

  - content automation workflows

- This means:

  - Video creation happens in the background

  - Scaling becomes easier

  - Teams don’t rely on manual production

- Companies like Alibaba and Google DeepMind are pushing in this direction

Video is slowly becoming a feature, not a separate process.
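To make the "video as a feature" idea concrete, here is a minimal Python sketch of a product backend that renders clips in the background. Everything in it is illustrative: `VideoJob`, `generate_video`, and `render_catalog` are hypothetical names, and `generate_video` only returns a placeholder URL where a real integration would call a provider's API. It is not the API of any model mentioned in this article.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class VideoJob:
    """One background request, e.g. a product clip for a store listing."""
    product_id: str
    prompt: str
    aspect_ratio: str = "9:16"


def generate_video(job: VideoJob) -> str:
    """Hypothetical stand-in for a provider API call.

    A real integration would send the prompt to a video model's API and
    return the URL of the finished clip; this stub only builds a fake URL.
    """
    return f"https://example.com/videos/{job.product_id}.mp4"


def render_catalog(jobs: list[VideoJob], workers: int = 4) -> dict[str, str]:
    """Run all jobs on background threads and map product IDs to video URLs."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input jobs.
        urls = list(pool.map(generate_video, jobs))
    return {job.product_id: url for job, url in zip(jobs, urls)}


jobs = [
    VideoJob("sku-101", "Rotating shot of a ceramic mug on a wooden table"),
    VideoJob("sku-102", "Close-up of running shoes on a wet street"),
]
results = render_catalog(jobs)
print(results)
```

The point of the sketch is the shape, not the details: the rest of the product only sees `render_catalog`, so nobody has to open a video tool by hand, and swapping the stub for a real provider call would not change the calling code.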

Trend 4: The Market Is Splitting Into Clear Categories

AI video models in 2026 are no longer competing in one category; they’re splitting into distinct tiers based on purpose.

- Some models focus on:

  - realism and cinematic quality

  - high-end output

- Others focus on:

  - affordability

  - faster generation

- And some platforms focus on:

  - usability

  - storytelling workflows

- For example, tools like Frameo focus more on structured creation rather than raw generation

So the question is no longer “which model is best,” but “best for what?”

Trend 5: Vertical Video Is Quietly Becoming the Default

Nearly half of AI-generated videos are now created in 9:16 format, and that share is still growing.

- Most content is now consumed on:

  - phones

  - short-form platforms

- So naturally, creators are shifting toward:

  - vertical framing

  - shorter storytelling formats

- AI tools are adapting to this by:

  - prioritizing vertical outputs

  - optimizing for reels, shorts, and TikTok

Landscape video isn’t gone, but it’s no longer the default starting point.

If you step back, all of these trends point to one thing:

AI video is moving from experimentation to real, everyday production workflows.

Also read: Sora 2 vs Veo 3 vs Wan 2.5 Feature Comparison

What to Watch Next: AI Video Model News to Expect in Q2–Q3 2026

The next few months are likely to be less about surprise launches and more about consolidation, scaling, and ecosystem expansion. Most major players have already established their core capabilities, so the focus is shifting toward accessibility, integration, and competitive positioning.

Here’s what is likely to shape AI video model news through Q2 and Q3 of 2026.

Meta’s Entry Into AI Video Is Expected to Accelerate

Meta Platforms is expected to expand its AI video capabilities, building on its existing generative AI and social media ecosystem.

- Likely focus areas include:

  - integration with Instagram and Facebook video workflows

  - short-form and vertical video generation

  - creator monetization tools

- Meta’s advantage comes from:

  - direct access to distribution platforms

  - large-scale user data for training and optimization

If released, Meta’s model could prioritize social-first video creation rather than standalone generation quality.

Stability AI Is Likely to Push Open Models Further

Stability AI is expected to continue expanding open-source video models, building on its previous work in image and video generation.

- Focus areas may include:

  - open-weight video models

  - developer-friendly customization

  - integration with existing creative tools

- This could lead to:

  - more accessible alternatives to closed models

  - increased experimentation across developer communities

The open-source segment is likely to grow alongside enterprise-grade models.

Chinese AI Labs Are Expanding Rapidly

Companies like Alibaba and Kuaishou are expected to accelerate model releases and improvements through 2026.

- Continued focus on:

  - cost efficiency

  - faster generation speeds

  - competitive feature rollouts

- Recent momentum suggests:

  - more frequent updates

  - stronger competition with Western models

  - expansion into global markets

The competitive gap between regions is narrowing quickly.

AI Video Will Become More Workflow-Centric

The next phase of AI video development is expected to move beyond generation and toward complete content workflows.

- Increased focus on:

  - scripting + generation + editing in one system

  - consistency across scenes and episodes

  - production-ready outputs

- Platforms like Frameo reflect this direction by combining multiple steps into a single workflow

The shift is toward making AI video usable for end-to-end storytelling, not just clip creation.

Pricing and Access Models Will Continue to Shift

Pricing competition is expected to intensify as more models enter the market and API access expands.

- Likely changes include:

  - lower cost per second of video generation

  - more subscription-based access models

  - expanded API availability for developers

- This could result in:

  - broader adoption across industries

  - more experimentation by smaller teams

Cost and accessibility will play a bigger role in model adoption decisions.
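For teams weighing per-second API billing against a flat subscription, the comparison comes down to simple arithmetic. The sketch below uses made-up numbers: the rate, the monthly fee, and the clip length are assumptions chosen for illustration, not real pricing for any model discussed here. It also assumes the flat plan covers the full monthly volume.

```python
import math


def per_second_cost(seconds_per_clip: float, clips: int, rate_per_second: float) -> float:
    """Total pay-as-you-go cost for a month of clips billed per generated second."""
    return seconds_per_clip * clips * rate_per_second


def break_even_clips(monthly_fee: float, seconds_per_clip: float, rate_per_second: float) -> int:
    """Smallest monthly clip count at which per-second billing costs at least the flat fee."""
    cost_per_clip = seconds_per_clip * rate_per_second
    return math.ceil(monthly_fee / cost_per_clip)


# Assumed, illustrative numbers only; not real pricing for any model.
RATE_PER_SECOND = 0.10   # dollars per generated second (hypothetical)
MONTHLY_FEE = 30.00      # dollars per month for a flat plan (hypothetical)
CLIP_SECONDS = 8         # length of a typical short clip (hypothetical)

monthly_api_cost = per_second_cost(CLIP_SECONDS, 50, RATE_PER_SECOND)
threshold = break_even_clips(MONTHLY_FEE, CLIP_SECONDS, RATE_PER_SECOND)

print(f"50 clips via API: ${monthly_api_cost:.2f}")
print(f"Flat plan pays off from {threshold} clips per month")
```

Plugging in your own rate and volume gives a rough sense of which access model fits: occasional users tend to come out ahead on per-second billing, while high-volume teams usually benefit from a flat plan.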

Looking ahead, the next phase of AI video is less about whether the technology works and more about how widely and efficiently it can be used across different workflows and platforms.

Also read: Best Text-to-Video Local Model for AI Video Creation For 2026

Build Consistent, Scalable AI Video with Frameo

Keeping up with rapid changes in AI video models is one challenge; turning those capabilities into consistent, production-ready content is another. This is where Frameo fits into the workflow, focusing on precision, consistency, and scale for modern creators.

Frameo is designed as a professional video production system, not just a generation tool, bringing together scripting, visual creation, editing, and final output into one unified environment.

What Makes Frameo Different

- End-to-End Content Creation

  - Generate images, videos, and audio, then assemble everything on a single timeline

  - No need to switch between multiple tools or break the workflow

- Chat-Native, Workflow-Driven System

  - Combine conversational creation with structured pipelines

  - Turn raw ideas, images, or clips into fully animated, polished videos

- Professional-Grade Control

  - Control characters, styles, camera, lighting, voice, and formats

  - Built for commercial content, not just experimental outputs

Built for Consistency at Scale

- Maintain continuity across:

  - scenes

  - episodes

  - campaigns

  - entire content libraries

- Edit assets directly without restarting generation:

  - adjust characters, expressions, lighting, pacing

  - refine outputs at a granular level

- Reuse elements across projects:

  - characters

  - styles

  - voices

  - production pipelines

Designed for Real Production Workflows

- Supports configurable production pipelines

  - structured creation → review → iteration → final output

- Includes a professional timeline for assembling and refining content

- Enables batch generation for high-volume content needs

- Handles multiple formats and aspect ratios for different platforms

Who It’s Built For

- Storytellers and creators producing narrative content

- Studios and agencies working on campaigns or series

- Digital marketers creating ads, UGC videos, and social content

- Teams building training videos, product visuals, or branded media

Frameo is not built around one-off prompts; it is designed for repeatable, directed, and scalable video production where consistency and control matter.

If you’re working with AI video models and need a way to turn outputs into structured, high-quality content, Frameo provides a workflow that supports that process end to end.

Wrapping Up

AI video in 2026 is moving quickly, with new models, updates, and capabilities shaping how content gets created across platforms. What’s becoming clearer is how these tools are being used in real workflows, especially when consistency, speed, and scalability are involved.

For creators and teams, the focus is shifting toward building repeatable systems that can produce high-quality content without starting from scratch every time. The combination of model capability and structured workflows is what makes AI video practical for ongoing production.

If you want a structured way to turn these AI video capabilities into consistent, story-driven content, try Frameo to start building your videos faster.

FAQ

1. What is the best AI video model right now?

There isn’t a single “best” AI video model in 2026, as each model serves different needs. Google DeepMind Veo 3.1 is often used for high-end cinematic output, while Kling 3.0 from Kuaishou offers strong control at a lower cost. The right choice depends on quality, budget, and workflow requirements.

2. Is Sora really shutting down?

Yes, OpenAI has announced a phased shutdown of Sora in 2026. App access is expected to end around April 26, while API access continues until September 24. This has led many creators to explore alternatives such as Kling, Veo, and other emerging AI video models.

3. What happened to OpenAI Sora in 2026?

In 2026, OpenAI shifted its focus away from Sora, leading to its gradual shutdown. While Sora initially attracted attention for realistic video generation, newer models and competitive pressure changed the landscape. The transition reflects how quickly AI video development is evolving across companies and regions.

4. What is Kling 3.0, and how is it different?

Kling 3.0 is an AI video model developed by Kuaishou that focuses on motion control and affordability. It supports features like 6-axis camera movement and object path guidance, allowing more precise scene control. Compared to earlier models, it offers longer clips and better usability for structured video creation.

5. Who is winning the AI video model race in 2026?

The AI video model space in 2026 is competitive, with no single clear winner. Google DeepMind leads in cinematic quality with Veo, while Kuaishou is strong in cost-efficient models like Kling. Meanwhile, companies like Alibaba are rapidly improving performance and expanding globally.

6. How much does AI video generation cost in 2026?

AI video generation costs in 2026 vary widely depending on the model and access method. Subscription-based tools can start around $6–$30 per month, while API pricing is often calculated per second of generated video. Overall costs have decreased significantly, making AI video more accessible to creators, teams, and businesses.